Gemini 3.1 Flash Lite: A faster, more efficient AI model

Artificial intelligence models are evolving quickly to meet the growing demands of developers, businesses, and digital platforms. Gemini 3.1 Flash Lite represents another significant step in the development of next-generation AI. It's a lightweight model designed for fast responsiveness, efficient processing, and scalability for practical applications.

The Flash Lite version is designed for environments where speed, performance, and cost control matter. Whereas many large AI models focus on advanced reasoning, smaller models aim to balance performance against minimal use of computing resources.

This approach makes the lightweight model well suited to large-scale applications, mobile platforms, and services that require real-time responsiveness.

With architectural improvements and optimizations, this new model enables developers to build intelligent, responsive applications while maintaining high-quality results.

What is Gemini 3.1 Flash Lite?

Gemini 3.1 Flash Lite is a high-performance multimodal model optimized for speed and cost-effectiveness.

In the Gemini ecosystem:

- Gemini 3.1 Pro is designed for tasks requiring in-depth reasoning

- Gemini 3 Flash is designed to balance efficiency and capability

- Gemini 3.1 Flash Lite serves as the "production engine" of the system

Despite its "Lite" name, this model is not simply a reduced-performance version of its predecessor. It's built on the same core architecture as the larger models in the same family and optimized for exceptionally fast processing.

Technical specifications

- Context Window: 1 million tokens (Input) / 64k tokens (Output)

- Supported data types: Text, images, audio, video, and PDF.

- Knowledge Cutoff: January 2025

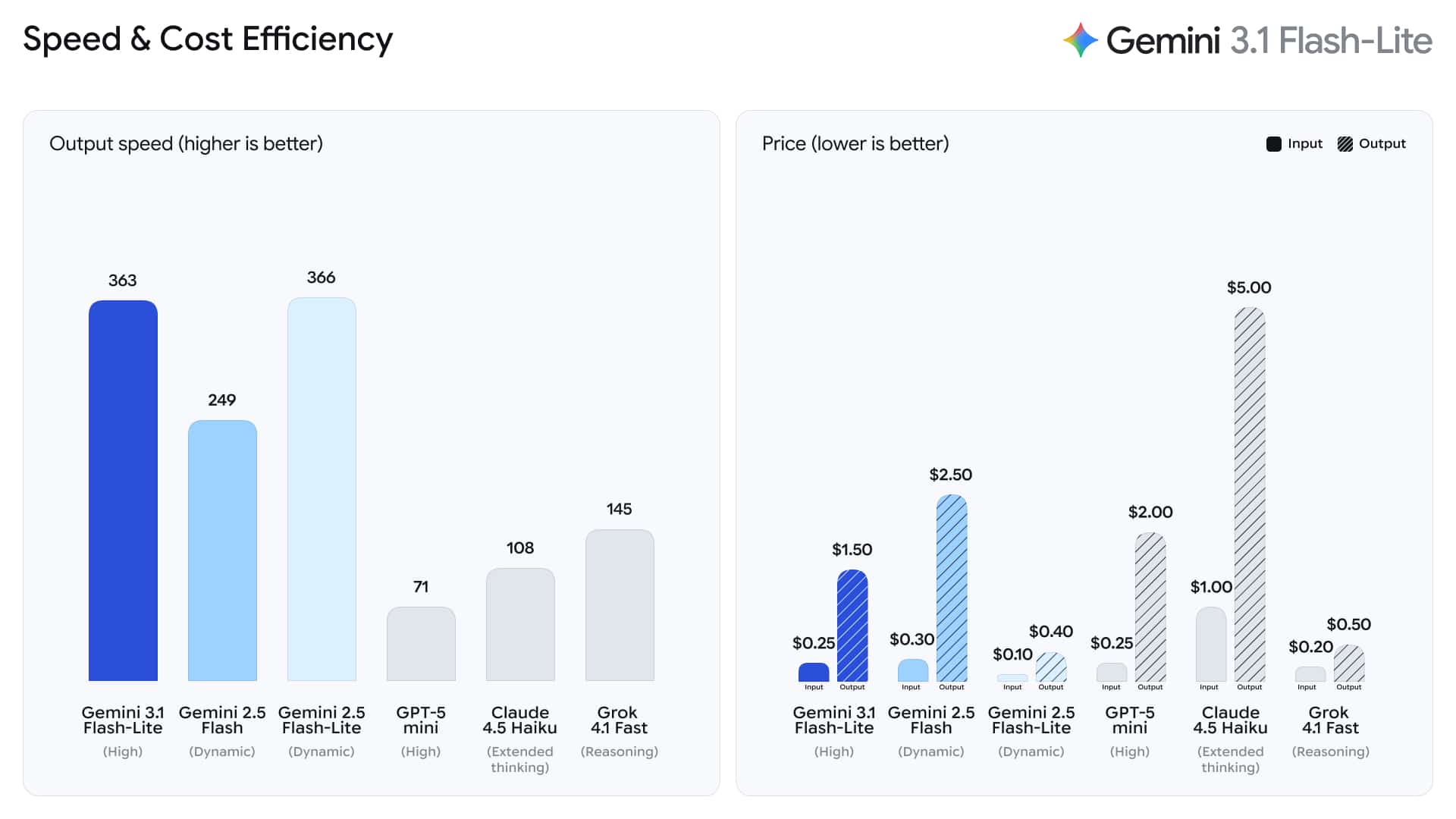

- Output speed: approximately 363 tokens per second.
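To make the throughput figure concrete, the following minimal sketch turns it into a rough generation time. It assumes a constant rate of 363 tokens per second and ignores network latency and time to first token, so real response times will differ. The function name is illustrative only.

```python
# Throughput figure quoted in the specifications above.
TOKENS_PER_SECOND = 363


def estimated_generation_seconds(output_tokens: int,
                                 tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Rough time to generate a response at a constant token rate.

    Assumption: ignores network latency and time to first token.
    """
    if output_tokens < 0:
        raise ValueError("output_tokens must be non-negative")
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    # A short reply, a long reply, and the 64k-token output limit.
    for n in (200, 1_000, 64_000):
        print(f"{n:>6} tokens ~ {estimated_generation_seconds(n):.1f} s")
```

Under these assumptions, a 1,000-token reply takes a little under three seconds, while a full 64k-token output would take close to three minutes.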

What makes Gemini 3.1 different?

Gemini 3.1 introduces a new approach to lightweight AI models by combining higher processing speeds with improved language understanding capabilities.

This model is designed to support a wide variety of AI tasks used in daily life, while maintaining optimal performance for high-traffic systems.

Typical tasks that the model supports include:

- Conversational AI systems and chat interfaces

- Summarizing content and generating text

- Data retrieval and data classification

- Automated customer response systems

- Assisting with coding and development workflows

By prioritizing performance without compromising core capabilities, this model is a reliable choice for developers looking to build scalable AI systems.

Key features of Gemini 3.1 Flash Lite

The Flash Lite version features several improvements that make it suitable for modern digital systems.

High-speed performance

Speed is one of the key characteristics of lightweight AI models. Applications such as chatbots, product recommendation systems, and real-time data analytics require rapid response times to provide a good user experience.

This model is optimized for low latency, allowing applications to respond quickly even when handling a large number of requests simultaneously.

| Feature | Benefit |
| --- | --- |
| Optimized inference engine | Faster response time |
| Lightweight architecture | Uses fewer computing resources |
| Scalable performance | Capable of supporting a large number of users |

These improvements enable organizations to effectively deploy AI systems, even in high-traffic environments.
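On the application side, handling many simultaneous requests usually means capping how many calls are in flight at once. The sketch below shows one common pattern with `asyncio` and a semaphore. The `call_model` argument and the `fake_model` stub are hypothetical stand-ins for a real client call, which is not shown here.

```python
import asyncio
from typing import Awaitable, Callable, List, Sequence


async def run_bounded(prompts: Sequence[str],
                      call_model: Callable[[str], Awaitable[str]],
                      max_concurrency: int = 8) -> List[str]:
    """Send all prompts concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(prompt: str) -> str:
        # Wait for a free slot before calling the model.
        async with semaphore:
            return await call_model(prompt)

    # gather returns results in the same order as the input prompts.
    return await asyncio.gather(*(worker(p) for p in prompts))


async def fake_model(prompt: str) -> str:
    """Hypothetical stub that stands in for a real model call."""
    await asyncio.sleep(0.01)
    return f"echo: {prompt}"


if __name__ == "__main__":
    replies = asyncio.run(run_bounded(["hello", "world"], fake_model, max_concurrency=2))
    print(replies)
```

The concurrency limit is a tuning knob: it should be set according to the rate limits and latency targets of the actual deployment.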

Cost-effective use of AI

One of the major challenges in implementing AI is the cost of infrastructure and operations.

Lightweight models are designed to reduce processing load, allowing for more efficient use of resources.

Benefits for organizations include:

- Supporting more users with fewer resources

- Reducing cloud infrastructure costs

- Scaling AI services more easily

For startups and growing platforms, cost efficiency is often a key factor in selecting an AI model for real-world systems.

Multitasking capability

Despite being a lightweight model, it supports a wide variety of tasks. The model's architecture allows it to handle requests in multiple languages while maintaining consistent performance.

Developers can use this model in multiple scenarios within the same application.

Examples of usage include:

- Creating product descriptions in an e-commerce system

- Summarizing a long article or document

- Automated customer response

- Extracting structured data from text

This flexibility makes the model suitable for both business and consumer applications.
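One way to serve several of these scenarios from a single application is to keep one prompt template per task and route every request through the same model call. The sketch below illustrates the idea. The template wording, the task names, and the injected `generate` callable are all assumptions made for illustration, not part of any official SDK.

```python
from typing import Callable

# Hypothetical prompt templates, one per usage scenario listed above.
PROMPT_TEMPLATES = {
    "product_description": "Write a concise product description for: {text}",
    "summarize": "Summarize the following document in three sentences:\n{text}",
    "customer_reply": "Draft a polite reply to this customer message:\n{text}",
    "extract": "Extract the names, dates, and amounts from this text as JSON:\n{text}",
}


def build_prompt(task: str, text: str) -> str:
    """Fill the template for the given task with the user's text."""
    try:
        template = PROMPT_TEMPLATES[task]
    except KeyError:
        raise ValueError(f"unknown task: {task!r}") from None
    return template.format(text=text)


def run_task(task: str, text: str, generate: Callable[[str], str]) -> str:
    """Build the prompt and pass it to an injected model-calling function."""
    return generate(build_prompt(task, text))


if __name__ == "__main__":
    # A stub in place of a real model call, so the sketch runs offline.
    print(run_task("summarize", "A long article...", lambda prompt: f"[model output for] {prompt}"))
```

Injecting `generate` keeps the templates testable without network access and makes it easy to swap in a real client later.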

Price: A new industry standard

One of the most significant changes in Gemini 3.1 Flash Lite is its pricing structure. As of 2026, it is considered one of the most cost-effective frontier models on the market.

| Token type | Price (per 1 million tokens) |
| --- | --- |
| Input tokens | $0.25 |
| Output tokens | $1.50 |
| Batch API | $0.125 (50% discount) |

For organizations migrating from Gemini 1.5 or Gemini 2.5 Flash, this pricing structure significantly reduces operating costs while retaining the multimodal capabilities of the Gemini family.
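The prices above can be turned into a quick cost estimate. The sketch below uses the listed per-million-token prices. It assumes the 50% batch discount applies to both input and output tokens, which the table does not state explicitly, so treat the batch figures as an approximation.

```python
# Prices from the table above, in USD per 1 million tokens.
INPUT_PRICE_PER_MILLION = 0.25
OUTPUT_PRICE_PER_MILLION = 1.50
# Assumption: the 50% batch discount applies to input and output alike.
BATCH_DISCOUNT = 0.50


def estimate_cost_usd(input_tokens: int, output_tokens: int, batch: bool = False) -> float:
    """Estimate the cost of a workload from its total token counts."""
    cost = (input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
            + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION)
    if batch:
        cost *= 1 - BATCH_DISCOUNT
    return cost


if __name__ == "__main__":
    # Example workload: 100,000 requests, each with 2,000 input and 500 output tokens.
    requests = 100_000
    total_in, total_out = requests * 2_000, requests * 500
    print(f"standard: ${estimate_cost_usd(total_in, total_out):.2f}")
    print(f"batch:    ${estimate_cost_usd(total_in, total_out, batch=True):.2f}")
```

For this example workload, the estimate works out to about $125 at standard pricing, or about $62.50 under the batch assumption.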

Gemini 3.1 Flash Lite compared to competing models

Compared to other small models such as GPT-5 mini or Claude Haiku 4.5, Gemini 3.1 Flash Lite has a significant advantage: a context window of 1 million tokens.

Most models in the same price range tend to have a much smaller context window, making this model ideal for tasks that require analyzing large amounts of data, such as:

- Long document analysis

- Reviewing the code of an entire project

- Analyzing large amounts of data at once

This advantage can reduce the need for complex Retrieval-Augmented Generation (RAG) systems, making AI application development easier and more efficient.
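Before deciding whether a retrieval pipeline is needed, it helps to check whether the material fits in a single request. The sketch below does this with a rough heuristic of about 4 characters per token, which is an assumption and not the model's real tokenizer. Use actual token counts from the API where accuracy matters.

```python
from math import ceil
from typing import List, Sequence

CONTEXT_WINDOW_TOKENS = 1_000_000  # input limit from the specifications above
CHARS_PER_TOKEN = 4                # assumption: rough average, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: Sequence[str], prompt_overhead_tokens: int = 0) -> bool:
    """True if all documents plus the prompt overhead fit in one request."""
    total = sum(estimate_tokens(d) for d in documents)
    return total + prompt_overhead_tokens <= CONTEXT_WINDOW_TOKENS


def pack_into_requests(documents: Sequence[str],
                       budget: int = CONTEXT_WINDOW_TOKENS) -> List[List[str]]:
    """Greedily group documents, in order, so each group stays within the budget."""
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for doc in documents:
        size = estimate_tokens(doc)
        if size > budget:
            raise ValueError("a single document exceeds the budget and must be split")
        if current and used + size > budget:
            batches.append(current)
            current, used = [], 0
        current.append(doc)
        used += size
    if current:
        batches.append(current)
    return batches
```

If `fits_in_context` returns `True`, the whole corpus can be sent in one request. Otherwise, `pack_into_requests` gives a simple fallback before reaching for a full retrieval system.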

Real-world usage examples

AI models like this are being used in numerous digital services across a wide range of industries.

Customer service platform

Companies can create an intelligent chat system that answers customer questions instantly, reducing the workload of the support team and increasing the speed of service.

Content platform

Content publishers and media companies can use AI to:

- Summarize articles

- Generate headlines

- Assist in the editorial process

Software development

Developers can integrate AI capabilities into applications to:

- Suggest code

- Create code documentation

- Detect and fix system errors

Automation in business

AI systems can analyze documents, extract key information, and automate repetitive tasks, helping to improve the efficiency of teams within an organization.

The future of powerful AI systems

The role of Gemini 3.1 in AI development

As AI technology continues to advance, developers often create systems that use multiple models together to handle various types of tasks.

Gemini 3.1 plays a crucial role in this ecosystem, as it provides a fast and efficient option for tasks requiring frequent and rapid responses.
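A multi-model setup of this kind often comes down to a small routing function. The sketch below picks a model tier from two request flags. The flags and the routing rules are hypothetical, and the strings are the display names used in this article, not API model identifiers.

```python
from dataclasses import dataclass

# Display names from this article, not official API model identifiers.
PRO = "Gemini 3.1 Pro"
FLASH = "Gemini 3 Flash"
FLASH_LITE = "Gemini 3.1 Flash Lite"


@dataclass(frozen=True)
class Request:
    prompt: str
    needs_deep_reasoning: bool = False  # hypothetical flag set by the application
    latency_sensitive: bool = False     # hypothetical flag set by the application


def choose_model(request: Request) -> str:
    """Pick a model tier using simple, illustrative rules."""
    if request.needs_deep_reasoning:
        return PRO          # in-depth reasoning tasks
    if request.latency_sensitive:
        return FLASH_LITE   # frequent, rapid-response tasks
    return FLASH            # balance of efficiency and capability


if __name__ == "__main__":
    print(choose_model(Request("Classify this support ticket", latency_sensitive=True)))
```

In practice the flags might come from the endpoint being called or from a lightweight classifier. The point is that fast, high-volume traffic goes to the lightweight model by default.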

Future developments may include:

- Enhanced multimodal capabilities

- Improving reasoning efficiency in small models

- Improved integration with cloud-based development platforms

- Advanced tools for developing AI-driven applications

These advancements will help expand the role of AI in digital products and services in daily life.

Summary

Artificial intelligence is becoming a fundamental technology for modern applications. As demand increases, developers need AI models that are not only powerful but also efficient and scalable.

The launch of Gemini 3.1 Flash Lite demonstrates the trend in AI development that focuses on performance, speed, and practicality.

By combining fast response speed, language understanding capabilities, and cost-effectiveness, this model is a suitable choice for building large-scale AI applications.

In the future, as lightweight AI models mature, intelligent technology will become accessible across a wider range of industries, devices, and platforms.

