ChatGPT Trusted Contact: A new layer of safety for ChatGPT users

As AI plays an increasingly significant role in daily life, people are no longer using AI solely for work or research, but also for emotionally sensitive personal conversations. Many users are turning to ChatGPT to reflect on difficult feelings, explore personal questions, or seek advice during stressful times.

Recognizing this shift, OpenAI has launched a new optional safety feature called Trusted Contact. The feature is designed to help users connect with people they trust during times of intense emotional distress or when they may be at risk of self-harm.

Trusted Contact represents another step in OpenAI's overall effort to create an AI system that supports human well-being, promotes connection with people in the real world, and provides access to expert help when needed.

What is ChatGPT Trusted Contact?

Trusted Contact is an optional feature for adult ChatGPT users. Users can designate a trusted individual, such as a family member, friend, or caregiver, who may receive an alert if OpenAI's safety systems detect signs in a conversation that indicate a serious risk of self-harm.

The goal of this feature is not to replace professional care or emergency services, but to strengthen human connection, which mental health professionals consider one of the most important factors in risk prevention.

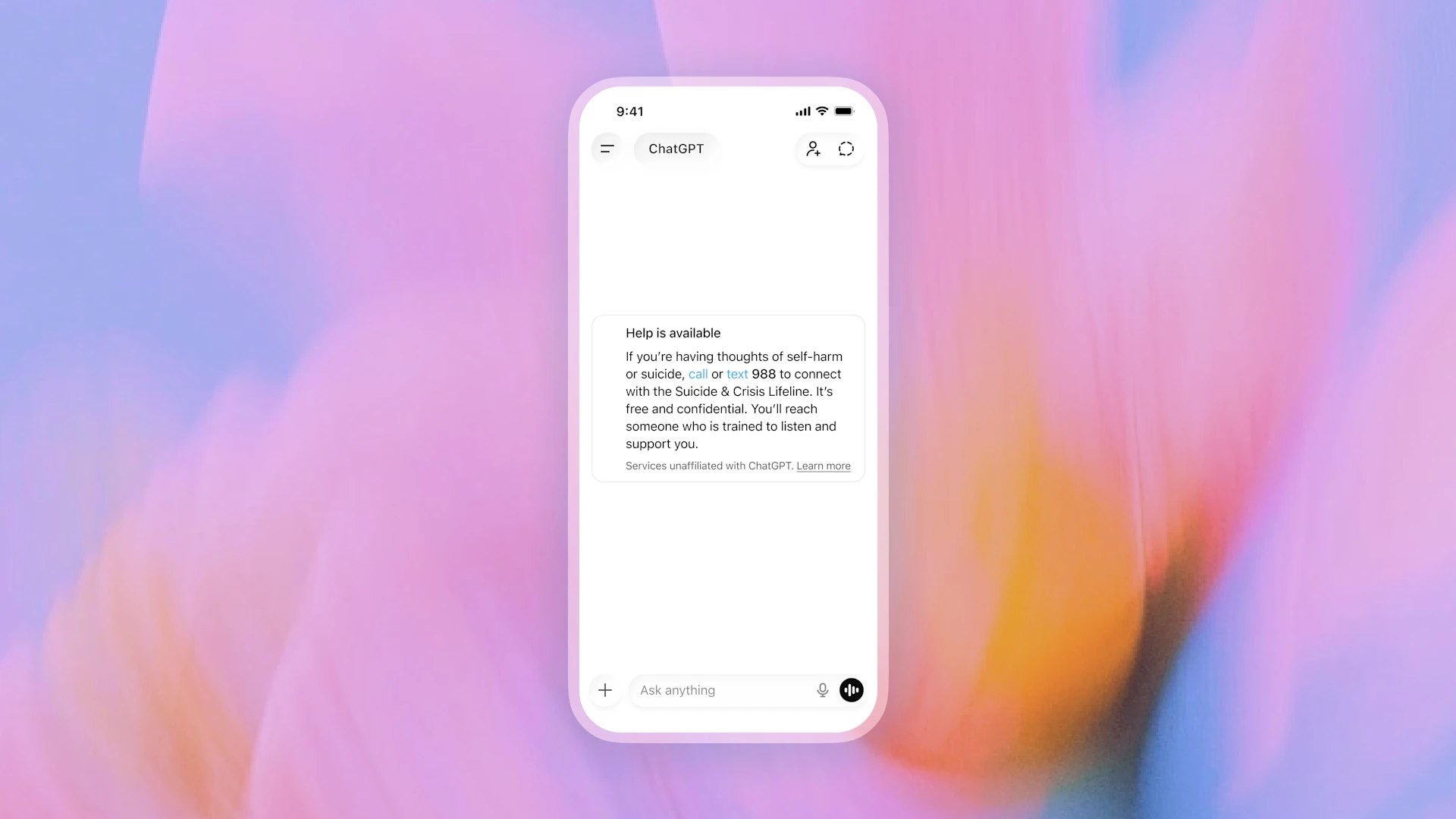

OpenAI emphasizes that Trusted Contact works alongside the safety systems already in ChatGPT, such as suggestions for country-specific helplines and safety-focused guidelines for how the model responds in sensitive conversations.

This new system builds upon the safety notification feature for youth accounts linked to parents, which was previously only available for Teen Accounts. Trusted Contact extends this safety approach to adult users as well.

How does ChatGPT Trusted Contact work?

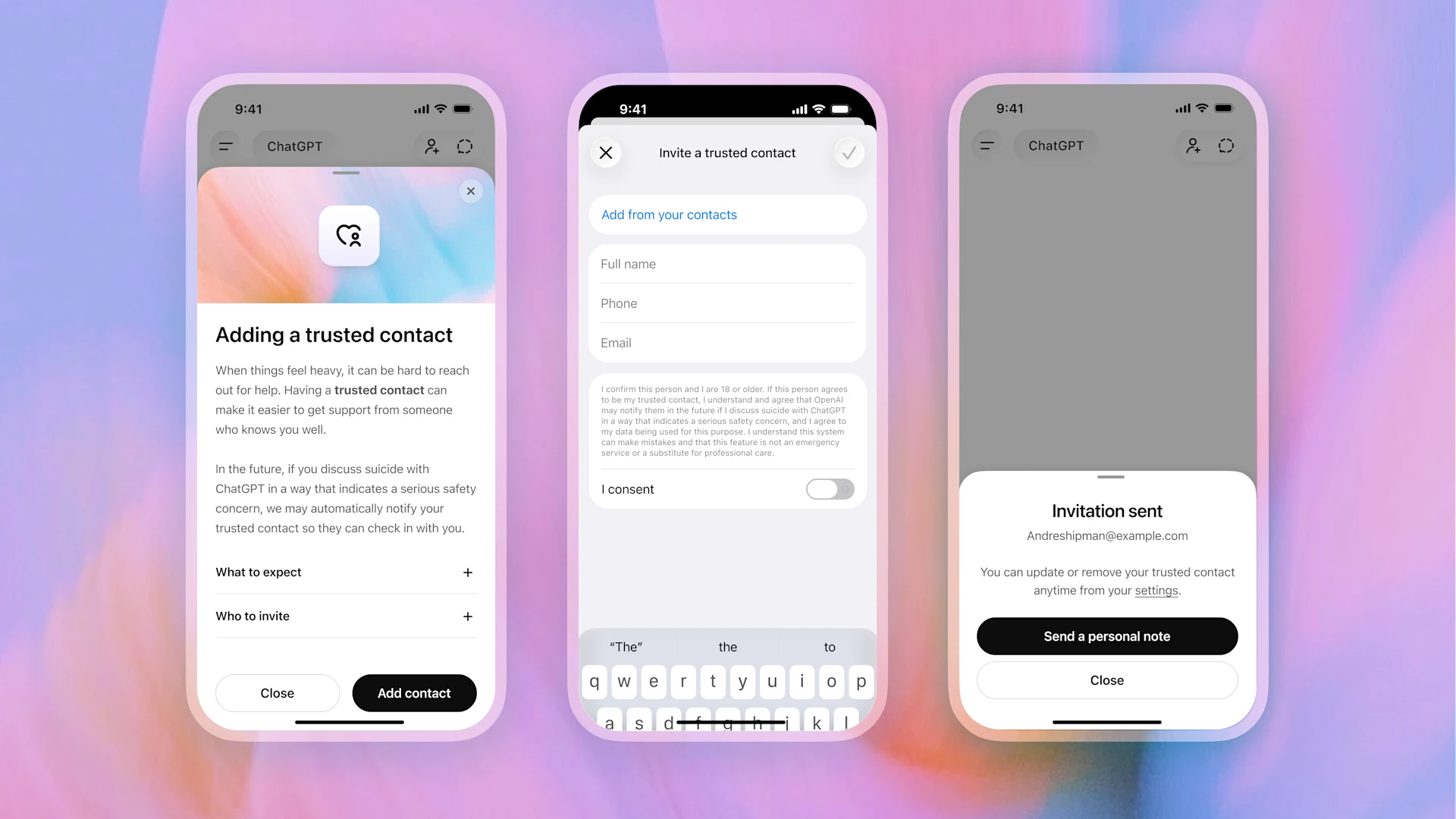

Users can add Trusted Contacts directly through their ChatGPT account settings page. The added person must accept the invitation before the feature will activate.

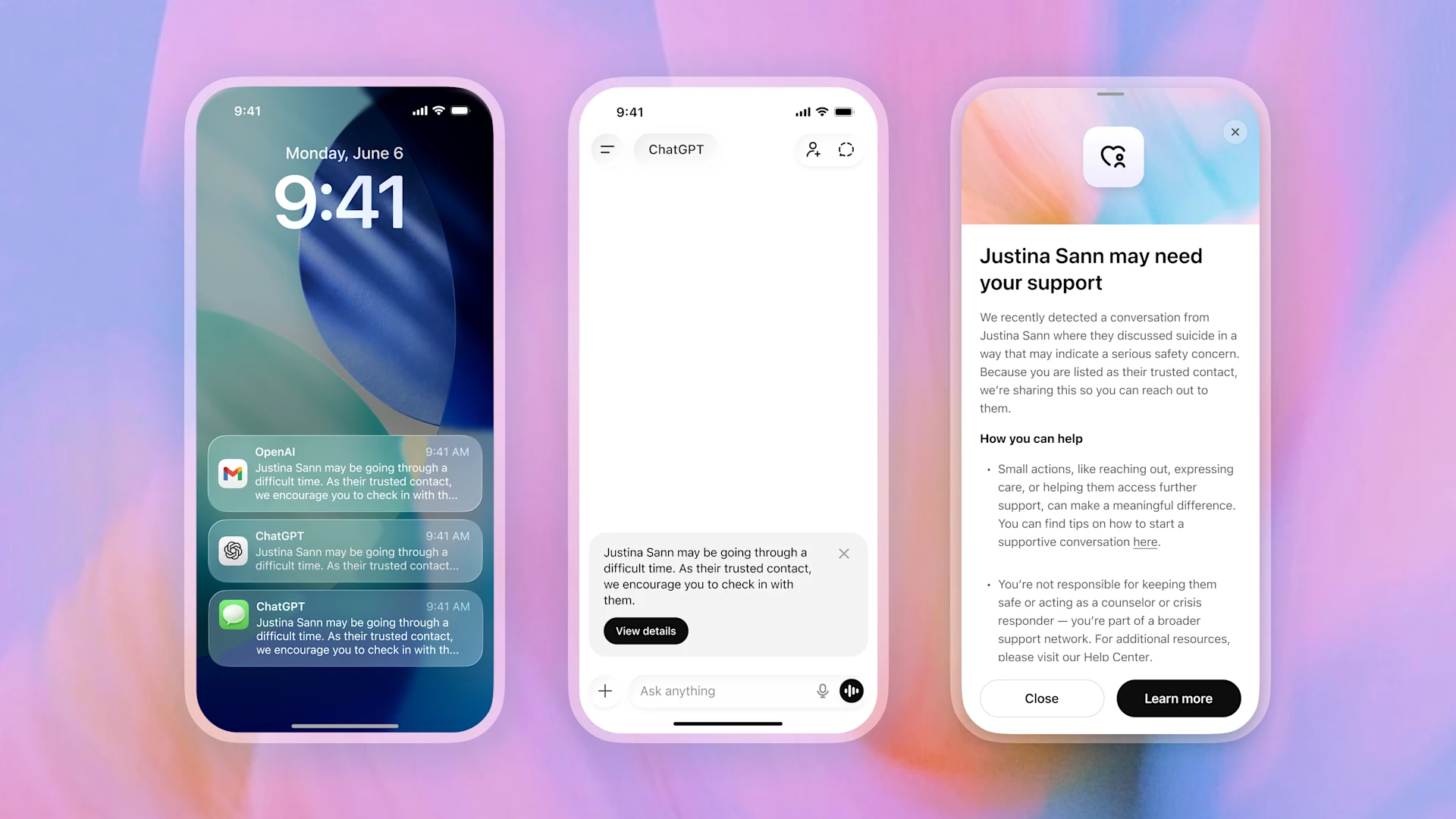

If OpenAI's automatic detection system later detects that a conversation may involve a serious risk of self-harm, ChatGPT will notify the user that their Trusted Contact may receive an alert and encourage the user to contact the individual directly. The system may also suggest ways to initiate a conversation to facilitate communication.

Before any alert is sent, the situation is reviewed by a specially trained human safety team. An alert goes to the Trusted Contact only if the reviewers judge that the situation poses a serious safety risk.

The notification itself is intentionally limited. It does not include a transcript or detailed conversation history. It only states in general terms that a conversation may involve a risk of self-harm and recommends that the Trusted Contact check in with the user.

Notifications may be sent via:

- SMS message

- In-app notifications for ChatGPT users

OpenAI states that users can delete or change Trusted Contacts at any time, and Trusted Contacts can also choose to unsubscribe from notifications at any time.
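The flow described above can be summarized as a small decision sketch. This is an illustration of the article's description only, not OpenAI's implementation: every name here (`TrustedContact`, `Outcome`, `handle_flag`, `build_alert`) and the alert wording are hypothetical, and the real detection and review steps are reduced to two boolean inputs.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional, Tuple


class Outcome(Enum):
    NOT_FLAGGED = auto()       # automated detection raised nothing
    FEATURE_INACTIVE = auto()  # no contact, invitation pending, or unsubscribed
    REVIEW_DECLINED = auto()   # human reviewers saw no serious safety risk
    ALERT_SENT = auto()        # limited alert goes to the Trusted Contact


@dataclass
class TrustedContact:
    """Hypothetical record for a contact added in account settings."""
    name: str
    accepted_invitation: bool = False
    unsubscribed: bool = False


def build_alert(user_name: str) -> str:
    """Compose a limited alert: general wording only, never chat content.

    The wording is invented for illustration.
    """
    return (
        f"A recent conversation suggests {user_name} may be at risk of "
        "self-harm. Please consider checking in with them."
    )


def handle_flag(
    user_name: str,
    contact: Optional[TrustedContact],
    detector_flagged: bool,
    reviewers_confirm_risk: bool,
) -> Tuple[Outcome, Optional[str]]:
    """Walk through the gates in the order the article describes them."""
    if not detector_flagged:
        return Outcome.NOT_FLAGGED, None
    # The feature is active only after the contact accepts the invitation,
    # and the contact can opt out of notifications at any time.
    if contact is None or not contact.accepted_invitation or contact.unsubscribed:
        return Outcome.FEATURE_INACTIVE, None
    # According to the article, the user is told at this point that an alert
    # may be sent and is encouraged to reach out directly (not modeled here).
    if not reviewers_confirm_risk:
        return Outcome.REVIEW_DECLINED, None
    return Outcome.ALERT_SENT, build_alert(user_name)
```

In this sketch the alert text is built only from the user's name, which mirrors the article's point that no conversation content reaches the Trusted Contact. The human review result is a required input rather than an optional step, reflecting the statement that no alert is sent without review.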

Balancing Safety and Privacy

One of the most important aspects of Trusted Contact is how it balances user safety with privacy protection.

OpenAI specifically designed this system to prevent the sharing of private conversation details with trusted contacts, even in serious situations. The system will avoid disclosing chat content or sensitive personal information.

The company further explained that every alert is reviewed by a team of trained human reviewers before it is sent. The purpose is to reduce false alarms and ensure that alerts are handled carefully and responsibly.

While OpenAI acknowledges that no safety system is perfect, the company states that it aims to review serious safety alerts within approximately one hour to help enable a timely response in critical situations.

Created based on recommendations from mental health experts

Trusted Contact was developed with guidance from doctors, researchers, and organizations specializing in mental health and suicide prevention.

OpenAI states that the project was informed by input from:

- The Global Physicians Network, which comprises more than 260 licensed physicians from over 60 countries

- The Expert Council on Well-Being and AI

- External organizations, such as the American Psychological Association

This collaboration reflects OpenAI's approach of grounding the design of AI safety systems in real-world clinical expertise, not just in technical developments.

Enhancing safety in ChatGPT

Trusted Contact is part of a broader safety strategy for handling emotionally sensitive conversations within ChatGPT.

OpenAI continues to develop its system to enable it to:

- Detect signs of stress or emotional distress.

- Respond with supportive language that helps reduce tension.

- Point users to real-world resources for help.

- Reject requests related to self-harm.

The company stated that it worked with more than 170 mental health professionals to develop guidelines for managing difficult and sensitive conversations within ChatGPT.

In some situations, ChatGPT may suggest to the user:

- Contact emergency services or a crisis hotline.

- Contact someone you trust.

- Take a break after prolonged continuous use.

- Seek advice from a mental health professional.

In addition, ChatGPT is designed to reject requests related to suicide methods or self-harm, redirecting users to safer responses and emergency assistance resources in their area instead.

AI should support human relationships, not replace them

One of the key concepts behind Trusted Contact is that AI should not be used in isolation from real-world human relationships.

OpenAI believes that AI systems should help connect people back to real-world support networks, rather than becoming proxies for human care. Trusted Contact is therefore designed to encourage users to remain connected with people they trust during vulnerable times.

This approach also reflects a shift in how AI companies view their responsibility. Safety no longer simply means preventing harmful content, but also includes designing systems that can recognize emotional distress and help people access meaningful human support.

Summary

The launch of Trusted Contact marks another significant step in designing safety systems for AI. Moving beyond a sole focus on content control, OpenAI is developing systems that support user well-being while maintaining strong privacy.

As conversational AI plays an increasingly significant role in the personal and emotional aspects of life, features like Trusted Contact could become a crucial element in enabling AI systems to respond more responsibly to sensitive situations.

The key message OpenAI wants to convey is clear: AI should not replace human relationships or expert care, but can play a role in helping people reconnect with the most important support systems in their lives.

